New Systems Development

Ventureaxis' New Systems Development service spans frontend development, backend systems, and database solutions. Using efficient DevOps processes and proven methodologies, we take a seamless, practical approach from start to finish.

ERP Process Deployments

We specialize in ERP Process Deployments, offering expert integration of eCommerce solutions such as nopCommerce, Microsoft Dynamics 365, and Shopify, and tailoring these platforms to streamline your business operations.

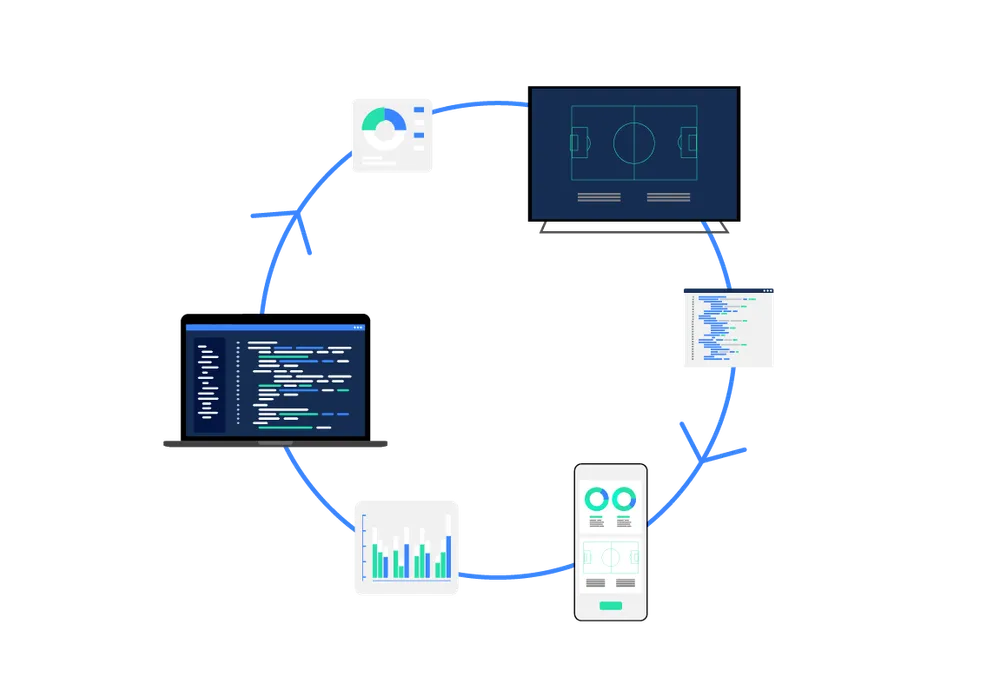

Integration and Data Management

Businesses need accurate, easily accessible data across all of their platforms and applications. Our team handles diverse integration requirements, from real-time ingestion and processing of data for broadcast graphics to batch loading of CSV files for financial transactions.

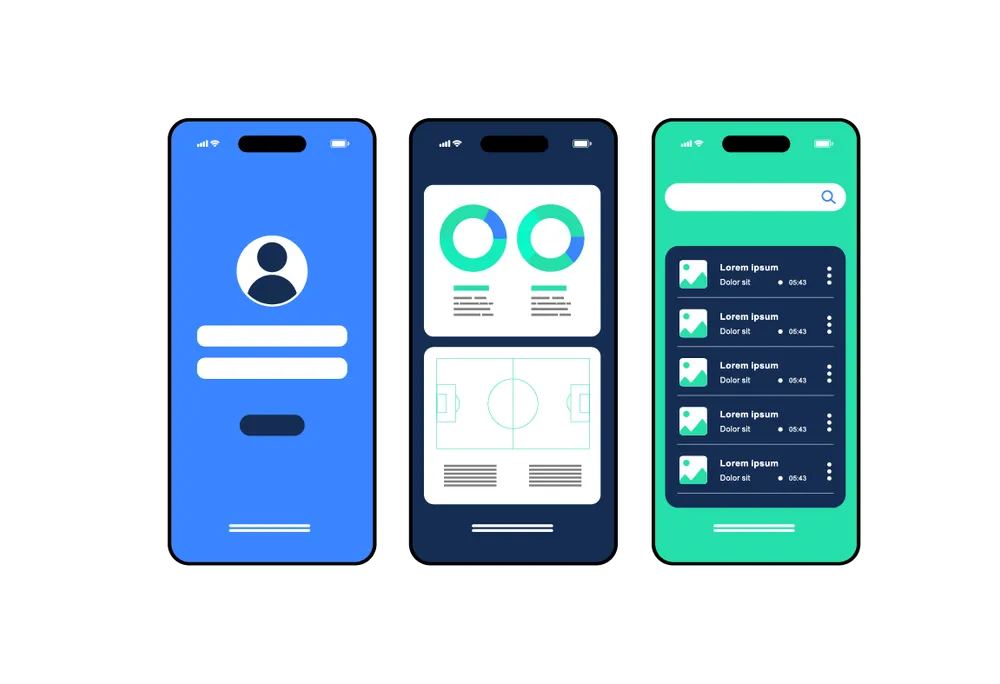

Mobile App Development

Ventureaxis excels in mobile app development, with a skilled team proficient in native technologies such as Kotlin and Swift and in cross-platform frameworks including Flutter, React Native, and Ionic. We cater to all your mobile application requirements and deliver first-class user experiences.